An end-to-end view

1- Abstract

In the rapidly evolving landscape of software development, performance management plays a crucial role throughout the entire lifecycle of software projects. This paper emphasises the importance of continuous performance management from initiation to delivery and ongoing maintenance, showcasing its integral role in achieving project objectives, enhancing software quality, and ensuring customer satisfaction.

2- Introduction

Traditionally, performance management in large software projects is treated as an afterthought. The project progresses through its normal development cycle, and at a certain stage—usually after completing several development cycles—load and performance tests are conducted to determine if the system meets the “performance barrier.” This barrier assesses whether the current performance levels of the system are satisfactory for meeting user or client needs. If all goes well, the project advances to its next stages.

However, it’s unlikely for a large system to pass performance testing without issues. This leads to a few possible scenarios:

- Tuning Performance Problems: This is probably the most common scenario. Detected performance issues often stem from a lack of performance-oriented coding practices and tools and must be addressed by carefully analyzing performance bottlenecks and retesting.

- Structural Performance Problems: These issues are often the most challenging to resolve and typically originate from poor architectural design, inadequate tool and framework selection, or unsound software engineering practices.

- Hardware Performance Problems: This occurs when hardware sizing has been inappropriately planned, requiring either a scaling down of performance targets or the procurement of hardware upgrades.

It’s also common for performance testing to be neglected, with problems only emerging after the system goes live. In such cases, operations and development teams must enter “firefighting” mode to manage the situation as effectively as possible.

Any of these scenarios is far from ideal, potentially leading to serious delays, increased costs, frustrated customers, and unusable systems. The severity of the impact depends on the type of performance issue encountered.

For example, identifying a structural performance problem at this stage could take months and significant resources to resolve, putting the entire project at risk.

It’s important to recognise that performance issues can arise at any time after the system goes live.

Performance management is not a one-time concern; it is an integral part of the software development lifecycle and must be considered in any serious development program. Introducing performance management early in the program, ideally before it starts, significantly reduces the likelihood of encountering performance issues later on.

Within the context of software development, performance management involves the continuous monitoring and improvement of various aspects of software performance throughout its lifecycle. This white paper explores why performance management is a critical, ongoing process and not merely a phase in the software project lifecycle.

3- Performance Management in Software Development

Performance management in software projects encompasses the establishment and monitoring of performance metrics and benchmarks, aimed at ensuring and enhancing the functional and operational aspects of the software. Key concepts include efficiency, responsiveness, scalability, and reliability, which are vital for the success of any software application.

- System Efficiency: System efficiency refers to the ability of a software system to perform its functions accurately and quickly, while utilising minimal resources such as CPU, memory, and disk space. Efficient software systems execute tasks in a timely manner without wasting resources. This involves optimizing algorithms, reducing unnecessary computations, and minimizing resource consumption to ensure optimal performance.

- Responsiveness: Responsiveness pertains to how quickly a software system reacts to user input or external events. A responsive system provides timely feedback to user actions, ensuring that users do not experience significant delays or lags when interacting with the application. This involves designing interfaces and processing logic to prioritize user interactions and minimize latency, ensuring a smooth and interactive user experience.

- Scalability: Scalability refers to the ability of a software system to handle increasing workload or user demand without compromising performance or functionality. A scalable system can efficiently accommodate growing data volumes, user traffic, or concurrent users by gracefully expanding resources or distributing workload across multiple servers or resources. This involves designing architecture and components in a way that allows for easy horizontal or vertical scaling as needed.

- Reliability: Reliability denotes the ability of a software system to consistently perform its intended functions accurately and predictably, without failures or errors. A reliable system operates as expected under normal conditions and gracefully handles exceptions or failures, ensuring uninterrupted service for users. This involves implementing robust error handling, fault tolerance mechanisms, and rigorous testing practices to identify and mitigate potential failures or issues that could impact system reliability.

4- The lifecycle of software projects

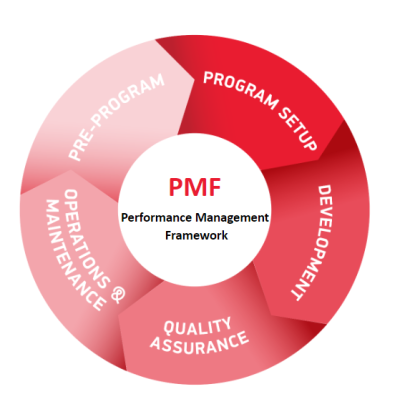

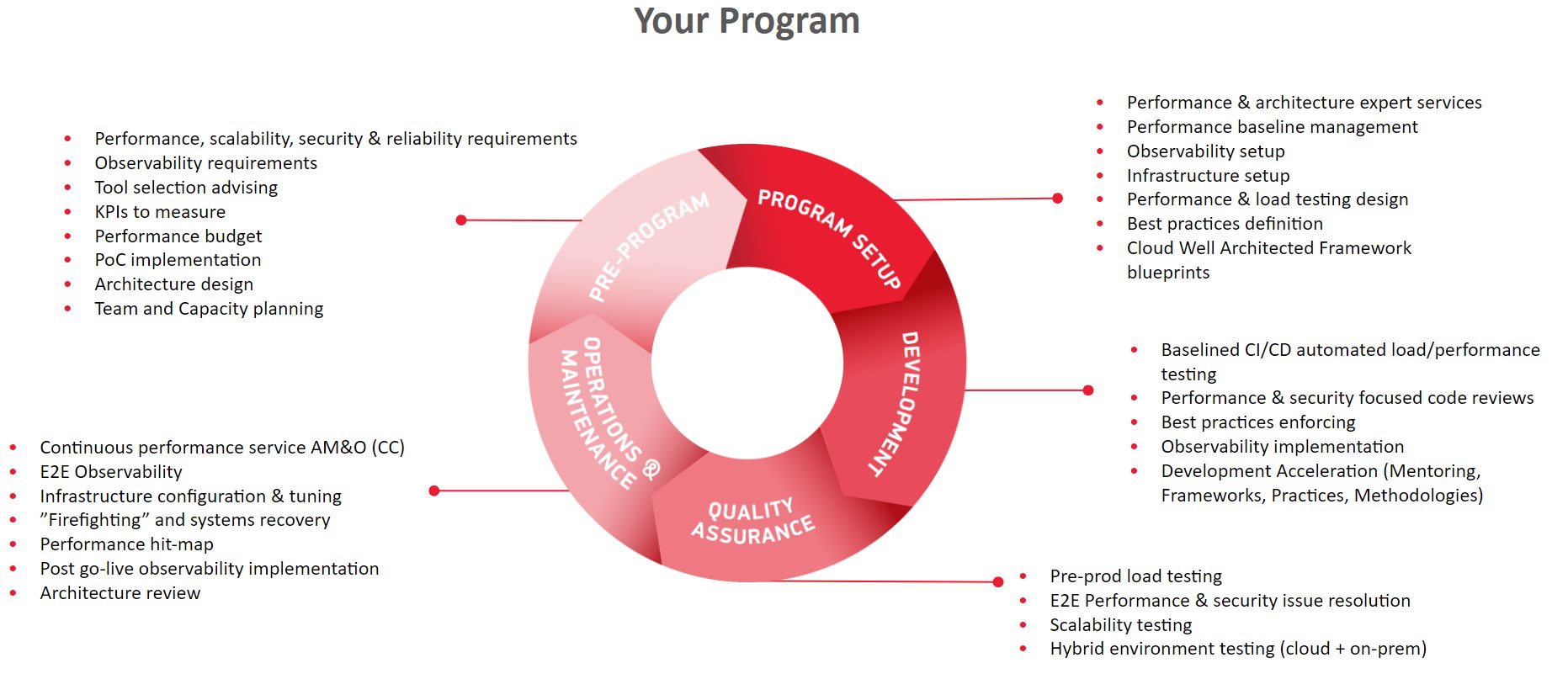

From a performance standpoint, a software development lifecycle (SDLC) includes several phases: Pre-Program (planning), Program setup (when the program starts), Development, Quality Assurance, and Operations & Maintenance. Each of these phases has distinct objectives and activities, but all of them must consider performance management to ensure the success and sustainability of the software project.

- Pre-Program: Planning with realistic and measurable performance goals is crucial. This phase involves defining key performance indicators (KPIs) and benchmarks that align with project objectives.

- Program setup: The system architecture and design should facilitate the achievement of performance goals. This phase should focus on designing for scalability, efficiency, and reliability.

- Development: Developers should write optimized code with performance in mind, employing practices that enhance performance and resource utilization.

- Quality assurance: Performance testing is critical to identify and address bottlenecks. This phase verifies that the software meets the agreed performance criteria under various conditions, through load and performance testing of both individual components and the end-to-end system (from the user’s perspective).

- Operations: Monitoring and managing the performance in a real-world setting is crucial for understanding the software’s behavior under different load conditions.

- Maintenance: Ongoing performance evaluation and enhancements are necessary to adapt to changing user needs and technological advancements.

5- Integrating Performance Management

As part of its performance-focused business model, Crossjoin has developed an integrated Performance Management Framework that covers all the activities a program requires to succeed from a performance perspective.

Pre-Program

Before a program starts, it’s crucial to ensure that the program’s functional and technical objectives are aligned with its performance goals. This alignment should be considered not only from a technical architecture perspective but also from budgetary, monitoring, and observability standpoints:

- Performance, Scalability, Security & Reliability Requirements: Identifying key requirements for performance, scalability, security, and reliability is fundamental for a successful program. These requirements can be incorporated into the program’s RFP (Request for Proposal) or, for in-house development, into the technical architecture and hardware specifications. The primary benefit of establishing these requirements upfront is to encourage all stakeholders to consider the system’s performance envelope in all program activities. For example, should the CI/CD pipeline include regular load and performance tests? What are the budget implications? Who is responsible for developing these tests, what data will be used, what hardware and tools are required, …?

- Observability Requirements: True end-to-end performance understanding comes from understanding the performance of each system component, including hardware metrics, database behavior, component APIs, workflows, microservices, apps, and front-ends. These components are often a mix of off-the-shelf and custom developments and require end-to-end monitoring for full observability. This can be achieved with commercial software or in-house development, and often represents a project in its own right.

- Tool Selection: Choosing foundational tools for the program must account for performance requirements. Often, selected tools may have inherent limitations in throughput, design, or architecture, or may not have been tested against the program’s processing requirements. These foundational choices must be fit for purpose.

- KPIs to Measure: Performance KPIs define what ‘done’ looks like for the performance aspect of the program. They enable objective discussions around performance with vendors and internal teams by allowing them to test software against these KPIs.

- Performance Budget: Performance-related activities need dedicated budgeting. If performance considerations are secondary, this aspect of the budget will likely be insufficient, potentially necessitating additional funds for program completion. Budget considerations should cover the performance team, hardware environment for performance testing, observability projects, etc.

- PoC Implementation: For many programs, specific Proofs of Concept (PoCs) are necessary to verify that selected tools and architectures can meet performance targets. This validation should occur before tool selection and development commencement.

- Architecture Design: A specific area of architecture design should focus on performance, addressing how the technical architecture will meet performance, scalability, security, and reliability requirements beyond just functional needs.

- Team and Capacity Planning: Incorporating performance “by design” requires careful planning and budgeting for design, development, testing, and operation within the system’s maximum performance envelope.
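As an illustration of the KPI item in the list above, a performance KPI can be written down as data and checked mechanically against measurements. The following is only a minimal sketch: the `LatencyKpi` class, the percentile-based definition, and the example limits are assumptions made for illustration and are not part of the Crossjoin framework.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass(frozen=True)
class LatencyKpi:
    """Hypothetical KPI: a latency percentile must stay at or below a limit."""
    name: str
    percentile: int   # for example 95 for p95; valid range is 1..99
    limit_ms: float   # agreed maximum latency at that percentile


def percentile(samples_ms: list[float], pct: int) -> float:
    """Return the pct-th percentile of the samples (needs at least two samples)."""
    # quantiles(n=100) returns the 99 cut points p1..p99, so p95 is index 94.
    return quantiles(samples_ms, n=100, method="inclusive")[pct - 1]


def evaluate(kpi: LatencyKpi, samples_ms: list[float]) -> bool:
    """True when the measured percentile is within the agreed limit."""
    return percentile(samples_ms, kpi.percentile) <= kpi.limit_ms
```

Expressing KPIs this way gives vendors and internal teams the same objective pass/fail criterion, for example `evaluate(LatencyKpi("checkout p95", 95, 300.0), measured_latencies)`, where the KPI name and the 300 ms limit are invented for the example.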

Program setup

During the initial stages of the program, several key activities are crucial: establishing a performance baseline, defining best practices, designing and implementing the system architecture with performance in mind, setting up observability and infrastructure, designing performance and load tests, and creating the correct blueprint for cloud services utilization.

From a performance standpoint, these activities demand expert knowledge and tools for success. It is highly unlikely that an in-house team or a generalist vendor will possess the necessary skills and expertise. Therefore, adopting a complementary approach with expert services from a trusted provider, such as Crossjoin, is generally considered the best strategy.

Development

During the development stage there are several key activities required to make sure the end software product performs according to expectations:

- Auditing & mentoring: an external party works with the team to ensure code reviews take place, enforce best practices, and provide expert guidance on methodology, framework usage, and coding best practices for performance and security.

- Observability implementation: working in tandem with the program development team to make sure observability requirements are met and tested together with the remaining program deliverables.

- Program automation: developing performance and load tests and automating their execution in the CI/CD pipeline.
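The automation item above can be pictured as a small load test whose result decides whether a pipeline step passes. The sketch below uses only the Python standard library; `fake_request`, the request counts, and the p95 budget are placeholders assumed for illustration, and a real program would more likely use a dedicated load testing tool against a representative environment.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import quantiles


def timed_call(operation) -> float:
    """Run the operation once and return its duration in milliseconds."""
    start = time.perf_counter()
    operation()
    return (time.perf_counter() - start) * 1000.0


def run_load_test(operation, requests: int, concurrency: int) -> list[float]:
    """Call the operation `requests` times using `concurrency` worker threads."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(lambda _: timed_call(operation), range(requests)))


def gate(latencies_ms: list[float], p95_budget_ms: float) -> int:
    """Return an exit code for the pipeline: 0 if p95 is within budget, else 1."""
    p95 = quantiles(latencies_ms, n=100, method="inclusive")[94]
    print(f"p95={p95:.1f} ms (budget {p95_budget_ms:.1f} ms)")
    return 0 if p95 <= p95_budget_ms else 1


def fake_request() -> None:
    """Hypothetical stand-in for a real call to the system under test."""
    time.sleep(0.005)
```

A pipeline step could then end with `sys.exit(gate(run_load_test(fake_request, requests=50, concurrency=5), p95_budget_ms=200.0))`, so that a breached budget fails the build in the same way a failing unit test would.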

Quality Assurance

During this stage, a series of activities are dedicated to ensuring that performance requirements and KPIs are met. The initial focus of the performance and load testing activities is to establish a known correlation between the hardware, system configuration, data set, and load in the testing environment and the production environment to ensure that testing is representative. Once this correlation is achieved, a series of load testing runs simulating different usage profiles for the system are performed, and the results are compared with the agreed-upon KPIs and performance requirements.

After the test results are available, a specific, performance-focused methodology is applied to identify potential bottlenecks and issues, along with recommendations for resolving these problems. The performance team collaborates closely with the program team to ensure that all identified problem areas are addressed.

Operations & Maintenance

After the program goes live, several key activities form part of ongoing performance management. For large programs, it makes sense to have a continuous performance service that works together with the regular operations team and performs L3 activities such as:

- Performance early warning: create a set of automations and dashboards that automatically identify potential performance problems and resolve them before they cause an incident. Crossjoin has identified many patterns that arise before a problem becomes a major production incident. Our application support and maintenance teams, together with our competence center, can help diagnose and fix many of these problems before customers notice them.

- Firefighting and systems recovery: once a problem has occurred, our application support and maintenance teams can help diagnose it and recommend a solution for any end-to-end performance problem.
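One simple way to picture an early warning automation is a trend check on a monitored metric: fit a straight line to recent samples and estimate how long it will take to reach a limit. The sketch below is an assumption-laden illustration (a linear trend, evenly spaced samples, hypothetical function names), not a description of the patterns Crossjoin actually monitors.

```python
from typing import Optional


def slope_and_intercept(values: list[float]) -> tuple[float, float]:
    """Least-squares line through (0, v0), (1, v1), ...; needs two or more samples."""
    n = len(values)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def intervals_until_breach(values: list[float], threshold: float) -> Optional[float]:
    """Estimate the sampling intervals left before the trend crosses the threshold.

    Returns 0.0 if the fitted latest value already reaches the threshold and
    None if the metric is flat or improving.
    """
    slope, intercept = slope_and_intercept(values)
    latest = intercept + slope * (len(values) - 1)
    if latest >= threshold:
        return 0.0
    if slope <= 0:
        return None
    return (threshold - latest) / slope
```

A dashboard or alerting job could call `intervals_until_breach` on, say, daily p95 latency samples and raise a warning when the estimate falls below an agreed number of days, giving the team time to act before users are affected.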

Most traditional support and operations teams have a segmented, partial view of the problem: the DBA looks at databases, the network admin at networks, and the application support team at application problems. What this view lacks is the ability to look at problems from an end-to-end perspective and diagnose them without losing that end-to-end mindset. With a team focused on end-to-end performance, the emphasis shifts to accountability and problem resolution, and away from finger-pointing and the “not my problem” syndrome.

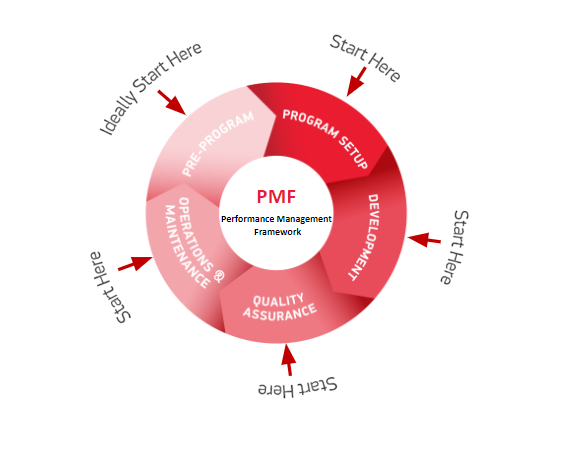

6- Where to start?

At Crossjoin, most of our customer engagements start at the operations and maintenance stage. This is quite understandable, since at this stage performance problems are no longer a risk but a reality: they have often materialized as an issue that requires urgent attention from a firefighting team.

It is also very common that, after the scare, we establish long-term relationships with our customers, in which our role is to make sure the system behaves as expected on a more permanent basis.

7- Assessing performance maturity

For customers focusing on end-to-end performance, it is very important to assess the current maturity of their existing processes. At Crossjoin we have developed a Performance Management Framework compliance service that enables customers to benchmark their maturity level against best practice.

8- Conclusion

End-to-end performance management results in higher quality software, increased customer satisfaction, cost efficiency, and a stronger competitive edge. It challenges the misconception that performance is only a concern during the later stages of development by underscoring its significance throughout the project lifecycle.

Performance management is a critical and ongoing process in software development, extending well beyond mere testing and deployment. Integrating performance management throughout the software development lifecycle is crucial for delivering software products that are not only high in quality but also efficient and reliable.

Jorge Rodrigues

Associate Partner
